Lisa Didn't Ship. Scorpio Does. Here's What I Learned Building an AI Orchestration System.

My day job is running a large travel advisor network. My nights, for the past several months, have been something different: building an AI orchestration system. I've built it twice now. The first version never successfully completed a full development run. This is the story of what I learned.

The Problem

If you've used Claude Code (or any other agentic coding tool) for anything non-trivial, you already know the wall. You start a session, get into the codebase, build momentum, and then you run out of context. New session. Re-explain everything. Lose the thread.

Or worse, you create a really well-defined spec, launch an agent team, and they either stall and run out of context or run off in unpredictable directions. For one task that's annoying. For a real feature across ten or fifteen tasks, it's completely unmanageable.

What I wanted was simple to describe and hard to build: a system that could decompose a software development project into phases, run each phase in its own fresh session, and manage all the handoffs automatically. No babysitting. No context dumps. Files as shared memory instead of conversation history. Then put it all in a nice, user-friendly GUI so novices wouldn't need to mess with the terminal too much. Sort of the direction Claude Co-Work and the Codex Desktop app are heading, but more task-focused. Think fun graphics and chat-based feature interviews, a kanban board interface to visualize work, etc.

I wanted to be able to define a project objective, detail the requirements and user stories, then let 'er rip and watch the show.

The Inspiration

In late 2025 I started following Jeffrey Emanuel (https://x.com/doodlestein). At the time he'd published Agent Mail, a structured approach for agent sessions to pass information to each other. I'd been wrestling with this exact problem and struggling to get markdown-based comms to work reliably. Agent Mail was the first thing I'd seen that offered a real framework for inter-session communication.

Around the same time, Geoffrey Huntley's Ralph Wiggum Loop (https://ghuntley.com/ralph/) was entering the broader AI conversation: a Bash loop that puts Claude Code on a "night shift," iterating on a task with fresh context each pass until it's done. The codebase itself is the persistent state. I never used the loop directly, but the ideas in the air were unmistakable: session isolation, file-based state, autonomous iteration.

Jeffrey has since built out his broader Agentic Coding Flywheel. In some ways we've been building toward similar goals in parallel. He's more prolific in terms of projects (and public output), at least so far.

These ideas gave me the framework I needed. I started building.

Lisa: Attempt One

I'm a huge Simpsons fan, so it seemed obvious to name my first effort Lisa, a much smarter and more competent version of Ralph. Lisa was beautiful. A TypeScript and Bash hybrid, PostgreSQL for persistence, a 17-page React UI, three defined agent roles (Project Manager/System Architect, Developer, and QA) with structured mailboxes, real-time streaming, and state machines.

The UI was genuinely beautiful.

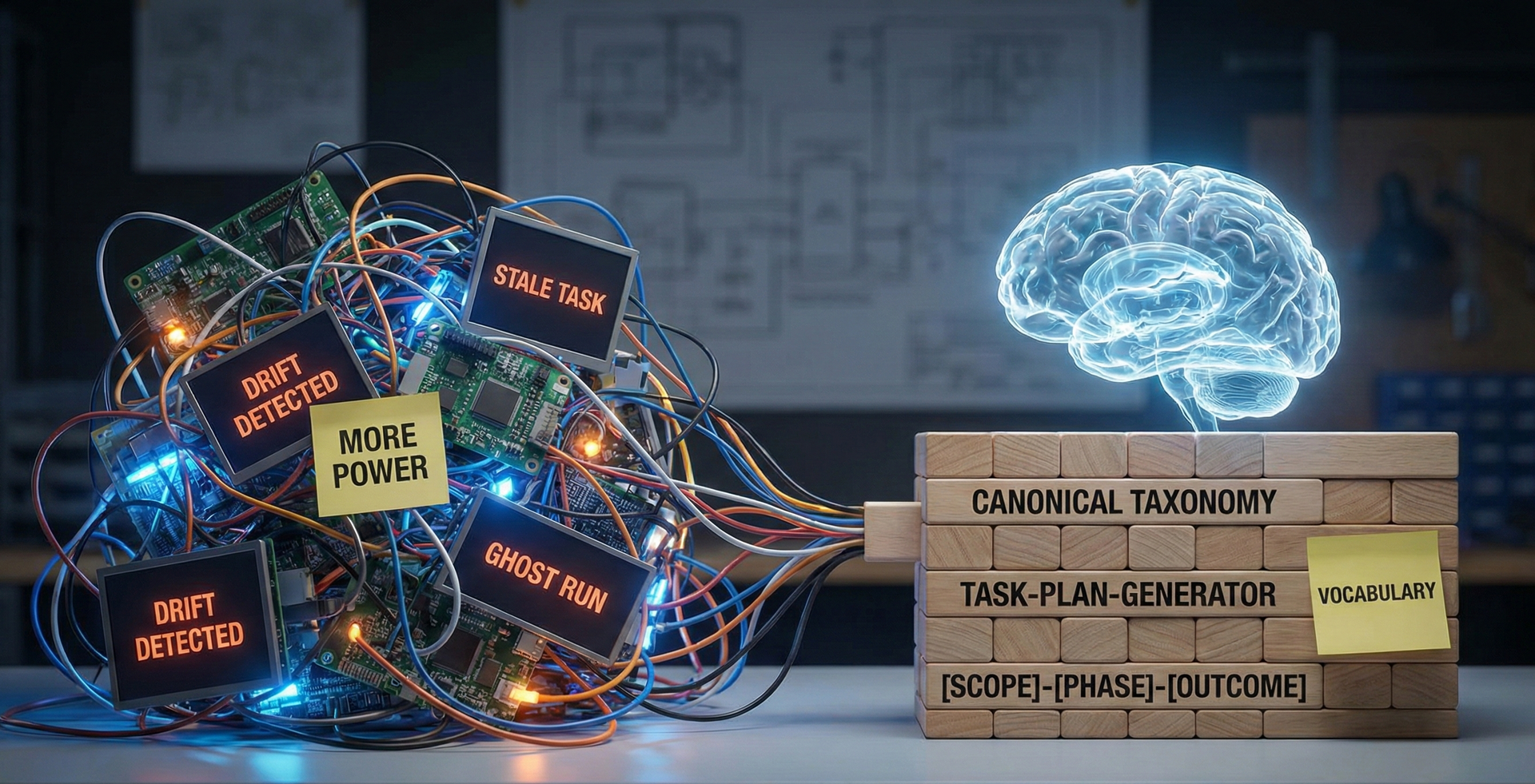

Lisa never completed a successful end-to-end development run for anything significant. The loop logic was buggy and inconsistent. Lisa's failures (well, my failures I suppose) were a great lesson in what doesn't work. While Lisa's UI was beautiful up front, under the hood it was a mess and the outputs didn't work.

Slop. But not slop because the code was bad. The deeper problem was architectural: I gave the agents roles without giving them defined behavior. A Developer agent building a login form received the same base prompt as a Developer agent refactoring a database schema. Role without specificity doesn't shape behavior or guide output. It's just a label.

The agent had no idea how to do the work, only what kind of worker it was supposed to be. It would be like walking up to a ballerina and saying, "You're an expert tennis player." Mmmm, okay, sure.

The UI was fighting against itself too. Seventeen pages with buttons for steps that should have just happened automatically. I kept hearing my own voice saying: I just wish the thing would work.

So I scrapped it. Lisa was killed off.

Scorpio: From Scratch

I still wanted to play on the Simpsons-inspired Ralph wave, and if Lisa Simpson is the smartest character in the Simpsons universe, Hank Scorpio might be the most effective. He appears in exactly one episode: Season 8's "You Only Move Twice," voiced by Albert Brooks. Charming CEO of Globex Corporation, excellent benefits, genuinely supportive of his team, casually attempting world domination. He doesn't overthink the architecture. He just gets things done.

I liked that energy for what I was building next.

Scorpio is Bash-first, with file-system state and no infrastructure dependencies. The core idea is the same (orchestrate independent AI sessions through a phased pipeline), but with one critical architectural shift: skills instead of roles. There is a UI, or will be, but Scorpio's power is that it just gets to the heart of the matter and throws a flamethrower at it. Watch that episode if you don't get the reference.

Skills are self-contained markdown definitions. Each one specifies exactly what an agent should do, how it should do it, and what constraints apply, per task type. A dev-task-runner skill behaves completely differently than a bug-investigator skill, which behaves differently than a qa-reviewer. The orchestrator loads the skill registry, matches skills to tasks, and injects the right definition into each session's prompt.
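To make the registry idea concrete, here is a minimal sketch in Python (Scorpio itself is Bash, so this illustrates the pattern and is not its actual code). It assumes one markdown file per skill, named after the skill, and a hypothetical `skill` field on each task that names the skill it needs. The function names and file layout are my own illustration.

```python
import tempfile
from pathlib import Path


def load_skill_registry(skills_dir: Path) -> dict[str, str]:
    """Map each skill name (the file stem) to its markdown definition."""
    return {
        path.stem: path.read_text(encoding="utf-8")
        for path in sorted(skills_dir.glob("*.md"))
    }


def build_prompt(task: dict, registry: dict[str, str]) -> str:
    """Inject the skill definition that matches the task into its prompt."""
    skill_name = task["skill"]  # hypothetical field naming the required skill
    if skill_name not in registry:
        raise KeyError(f"no skill registered for {skill_name!r}")
    return f"{registry[skill_name]}\n\n## Task\n{task['description']}\n"


# Demo with a throwaway skills directory (contents are made up).
with tempfile.TemporaryDirectory() as tmp:
    skills_dir = Path(tmp)
    (skills_dir / "dev-task-runner.md").write_text(
        "# dev-task-runner\nImplement the task, then run the tests.\n",
        encoding="utf-8",
    )
    (skills_dir / "qa-reviewer.md").write_text(
        "# qa-reviewer\nReview the change against the acceptance criteria.\n",
        encoding="utf-8",
    )
    registry = load_skill_registry(skills_dir)
    task = {"skill": "qa-reviewer", "description": "Review the login form."}
    prompt = build_prompt(task, registry)
```

The point of the design is that the prompt for a QA review and the prompt for a dev task share nothing except the orchestrator that assembled them.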

Task-specific behavior, not generic labels.

The rest is built around one principle: files over context. Every session reads from disk, writes to disk, and passes to the next session through structured files. Disk is unlimited. Context windows are not. This isn't a workaround, it's the architecture!
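A handoff through disk can be sketched in a few lines. This is an illustrative Python version, assuming one JSON file per phase in a state directory; the file names, fields, and the atomic-rename step are my assumptions for the example, not a description of Scorpio's actual file format.

```python
import json
import os
import tempfile
from pathlib import Path


def write_handoff(state_dir: Path, phase: str, payload: dict) -> Path:
    """Write a phase's output atomically so a reader never sees a partial file."""
    state_dir.mkdir(parents=True, exist_ok=True)
    target = state_dir / f"{phase}.json"
    tmp_path = target.with_suffix(".json.tmp")
    tmp_path.write_text(json.dumps(payload, indent=2), encoding="utf-8")
    os.replace(tmp_path, target)  # atomic rename within one filesystem
    return target


def read_handoff(state_dir: Path, phase: str) -> dict:
    """Load what an earlier phase left on disk; no conversation history needed."""
    return json.loads((state_dir / f"{phase}.json").read_text(encoding="utf-8"))


# Demo: a "plan" session writes, and a later "build" session reads.
with tempfile.TemporaryDirectory() as tmp:
    state_dir = Path(tmp) / "state"
    write_handoff(
        state_dir,
        "plan",
        {"result": "success", "tasks": ["add login form", "write tests"]},
    )
    handoff = read_handoff(state_dir, "plan")
    leftover_tmp_files = list(state_dir.glob("*.tmp"))
```

The next session starts with an empty context window and still knows everything it needs, because everything it needs is in the file.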

The pipeline runs in phases: discover → plan → build → validate → release → learn. Each session writes one of three results:

- Success

- Failed, or

- Needs Decision

When a session hits an ambiguity it can't resolve, it flags it and a separate "thinker" session analyzes the problem and can retry with a modified prompt. The system self-heals without you. It works until it figures it out, meets acceptance criteria, and passes tests.
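Here is a rough Python sketch of that control flow, with stub functions standing in for real agent sessions. The phase names and the three results come from the description above; the retry limit, the choice to halt on Failed, and every function name are assumptions made for the example.

```python
from enum import Enum

PHASES = ["discover", "plan", "build", "validate", "release", "learn"]


class Result(Enum):
    SUCCESS = "success"
    FAILED = "failed"
    NEEDS_DECISION = "needs_decision"


def run_pipeline(run_session, think, max_retries=2):
    """Run phases in order; route NEEDS_DECISION through the thinker and retry."""
    log = []
    for phase in PHASES:
        prompt = f"Run the {phase} phase."
        for attempt in range(max_retries + 1):
            result = run_session(phase, prompt)
            log.append((phase, result))
            if result is Result.SUCCESS:
                break  # move on to the next phase
            if result is Result.NEEDS_DECISION and attempt < max_retries:
                prompt = think(phase, prompt)  # thinker returns a modified prompt
                continue
            return log, False  # assumption: FAILED or exhausted retries halts the run
    return log, True


def fake_session(phase, prompt):
    """Stub session: 'build' is ambiguous until the prompt contains a decision."""
    if phase == "build" and "Decision:" not in prompt:
        return Result.NEEDS_DECISION
    return Result.SUCCESS


def fake_thinker(phase, prompt):
    """Stub thinker: resolves the ambiguity by amending the prompt."""
    return prompt + " Decision: prefer the simpler option."


log, ok = run_pipeline(fake_session, fake_thinker)
```

In the demo, the build phase asks for a decision once, the thinker amends the prompt, and the retry succeeds, so the run completes all six phases in seven sessions.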

Scorpio today: ~3,200 lines of Bash, 70+ integration tests passing, 20+ skills covering the full lifecycle, phase-based execution with dependency ordering and lock-aware parallel sessions, and support for Claude, Codex, and Gemini. It has successfully built itself and a few other projects I'm working on.
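For readers wondering what "dependency ordering and lock-aware parallel sessions" could look like, here is one possible sketch in Python using the standard library's `graphlib`. It assumes each task declares the tasks it depends on and the file paths it needs exclusive access to; what Scorpio actually locks is not covered in this post, so treat the task names, paths, and the `plan_waves` function as hypothetical.

```python
from graphlib import TopologicalSorter


def plan_waves(deps: dict[str, set[str]], locks: dict[str, set[str]]) -> list[list[str]]:
    """Group tasks into waves that can run in parallel.

    A task joins a wave only when its dependencies are finished and no other
    task in that wave holds one of the locks it needs.
    """
    sorter = TopologicalSorter(deps)
    sorter.prepare()  # raises graphlib.CycleError on circular dependencies
    waves: list[list[str]] = []
    pending: list[str] = []  # ready tasks still waiting for a wave
    while sorter.is_active() or pending:
        pending += sorted(sorter.get_ready())
        wave, held, deferred = [], set(), []
        for task in pending:
            needed = locks.get(task, set())
            if held & needed:
                deferred.append(task)  # lock conflict: wait for the next wave
            else:
                wave.append(task)
                held |= needed
        waves.append(wave)
        sorter.done(*wave)
        pending = deferred
    return waves


# Hypothetical tasks: "api" and "ui" both need the same file.
deps = {
    "schema": set(),
    "api": {"schema"},
    "ui": {"schema"},
    "tests": {"schema"},
    "docs": {"api", "ui"},
}
locks = {"api": {"src/routes.ts"}, "ui": {"src/routes.ts"}}
waves = plan_waves(deps, locks)
```

Here "api" and "tests" run side by side, "ui" waits a wave because it shares a lock with "api", and "docs" runs only after both of its dependencies finish.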

My Core Learning

Here's what I keep coming back to after all of this:

- LLMs can do almost anything, if the task is finite enough to define success as a boolean.

- Yes it's done or No it's not. 1 or 0. Binary. (I'm aware of how nerdy that is.)

- Every other decision (skill definitions, session boundaries, handoff protocol, thinker pattern, file-based state) flows from that constraint. If you can't state what "done" looks like before the agent starts, the loop will run until it times out and you won't know why. Getting orchestration right is getting decomposition right. Break work into pieces where done is unambiguous. The rest is iteration and perseverance.
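The boolean constraint is easy to show in code. In this illustrative Python sketch, `is_done` is a check defined before any work starts, and `step` stands in for one fresh agent session; both names, and the iteration cap, are assumptions for the example.

```python
def run_until_done(step, is_done, max_iterations=5):
    """Repeat a unit of work until a boolean check passes.

    Returns the iteration on which the check passed, or None if the cap is
    reached first, so a failure is explicit instead of a silent timeout.
    """
    for iteration in range(1, max_iterations + 1):
        step(iteration)
        if is_done():
            return iteration
    return None


# Demo: "done" means all three (pretend) tests pass. One more passes per step.
state = {"tests_passing": 0, "tests_total": 3}


def fake_step(iteration):
    state["tests_passing"] = min(state["tests_passing"] + 1, state["tests_total"])


def all_tests_pass():
    return state["tests_passing"] == state["tests_total"]


finished_on = run_until_done(fake_step, all_tests_pass)
never_done = run_until_done(lambda i: None, lambda: False, max_iterations=2)
```

If you can't write `is_done` as a function that returns True or False, the task probably needs to be broken down further before an agent touches it.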

Getting all the pieces to work together in harmony, that's literally what orchestration means. It turns out the word is exactly right.

What's Next

The UI is still in development. The core framework is solid. I'm exploring whether there's a commercial application here, and I'm building in public to find out. I hope to share more about this at groups and conferences, through this blog, and on socials.

This is the first post in that series.

If you're building something similar, or even just thinking about it, I'd genuinely like to hear what you're working on. All of this is so brand new and every day, literally, there are five new things to learn. No one can learn, see, do, test it all. It is an exciting, terrifying time to be alive. So what's the best thing to do in that situation? Grab a flamethrower...